[Video] The HBM4 Infographic: Key Specs and Performance Leap

Samsung Electronics has commenced mass production of HBM4 (6th-generation High Bandwidth Memory) and initiated commercial shipments to customers, marking the industry’s first deployment of this memory standard. The accompanying video details the technical specifications and architectural advances underlying Samsung’s HBM4.

Redefining Bandwidth for Next-Generation AI

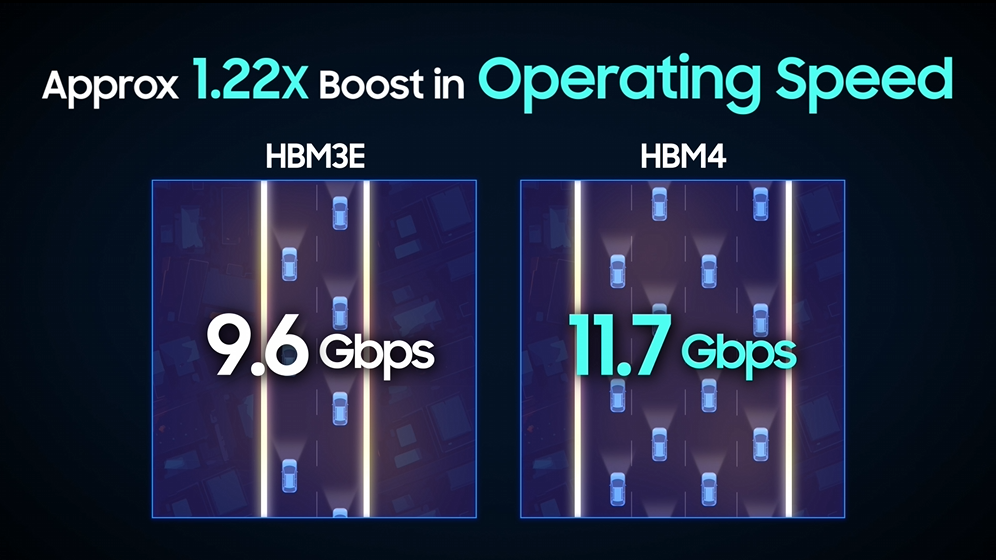

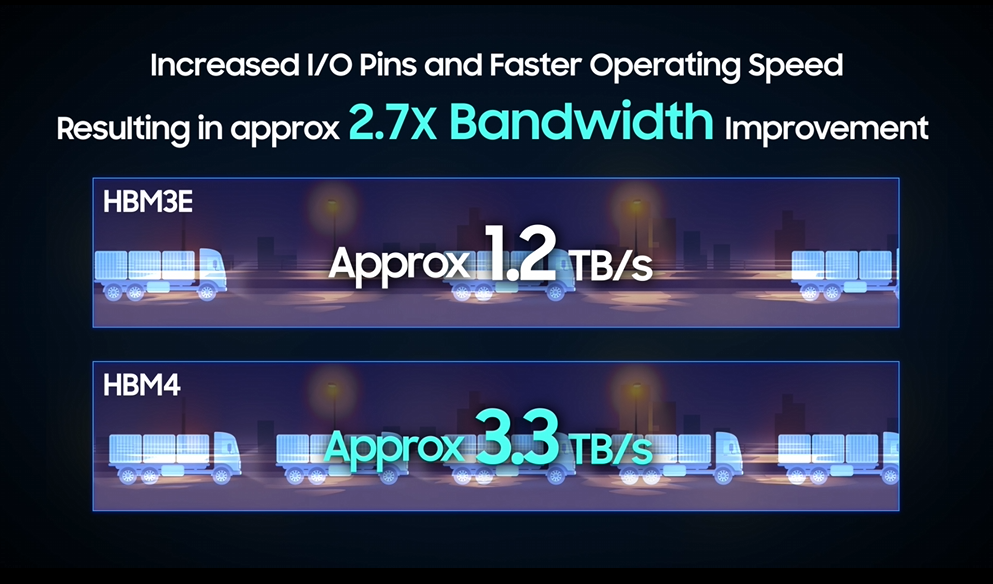

HBM4 features an expanded I/O interface of 2,048 pins, double the 1,024 pins of HBM3E, and supports a base data transfer rate of 11.7Gbps per pin with a peak rate of up to 13Gbps. At the peak rate, this translates to a total memory bandwidth of up to 3.3TB/s per stack, approximately 2.7 times that of HBM3E. For reference, even at the base rate (about 3.0TB/s) this bandwidth is sufficient to transmit roughly 750 full 4GB movie files per second, a level of throughput aimed at the data demands of large-scale AI model training and inference.
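The bandwidth figures above follow from simple arithmetic: pin count times per-pin data rate, divided by eight bits per byte. The sketch below reproduces them under the assumption of decimal units (1TB = 1,000GB). The function and constant names are illustrative only, and the HBM3E figure is back-calculated from the stated 2.7x ratio rather than taken from a specification.

```python
# Per-stack bandwidth = I/O pins x per-pin data rate (Gbit/s) / 8 bits per byte.
# Pin count and data rates come from the article; all names are illustrative.

HBM4_PINS = 2048
BITS_PER_BYTE = 8


def stack_bandwidth_tbps(pins: int, gbps_per_pin: float) -> float:
    """Return per-stack bandwidth in TB/s, using decimal units (1 TB = 1000 GB)."""
    gigabytes_per_second = pins * gbps_per_pin / BITS_PER_BYTE
    return gigabytes_per_second / 1000


def movies_per_second(bandwidth_tbps: float, movie_gb: float = 4.0) -> float:
    """Return how many files of size movie_gb fit into one second of bandwidth."""
    return bandwidth_tbps * 1000 / movie_gb


base_tbps = stack_bandwidth_tbps(HBM4_PINS, 11.7)  # base rate from the article
peak_tbps = stack_bandwidth_tbps(HBM4_PINS, 13.0)  # peak rate from the article

# Assumption: HBM3E bandwidth is inferred from the article's "2.7 times" claim.
implied_hbm3e_tbps = peak_tbps / 2.7

print(f"HBM4 base: {base_tbps:.2f} TB/s ({movies_per_second(base_tbps):.0f} movies/s)")
print(f"HBM4 peak: {peak_tbps:.2f} TB/s ({movies_per_second(peak_tbps):.0f} movies/s)")
print(f"Implied HBM3E: {implied_hbm3e_tbps:.2f} TB/s")
```

Under these assumptions, the 3.3TB/s headline figure corresponds to the 13Gbps peak rate, while the 750-movie comparison lines up with the 11.7Gbps base rate.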

Integrating Memory and Foundry for Synergy

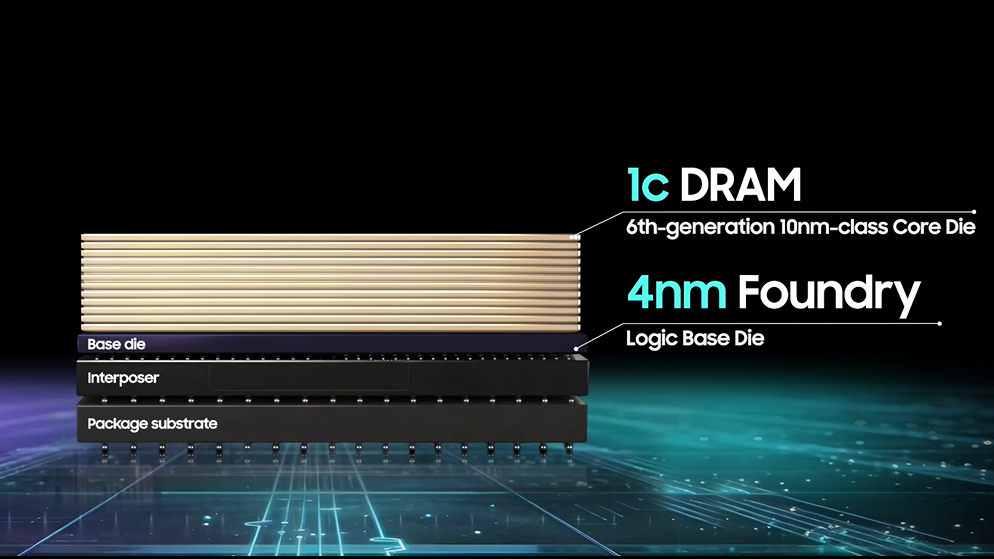

HBM4 combines Samsung’s 6th-generation 10nm-class (1c) DRAM process for the core die with a 4nm logic base die, an integration of the company’s memory and foundry capabilities. This combination is a direct result of Samsung’s unique position as a world-leading IDM (Integrated Device Manufacturer). By bringing its memory expertise and foundry capabilities together under one roof, Samsung has been able to optimize the interface between memory and logic at a fundamental level.

The Roadmap to Higher Stacking and Expanding Capacity

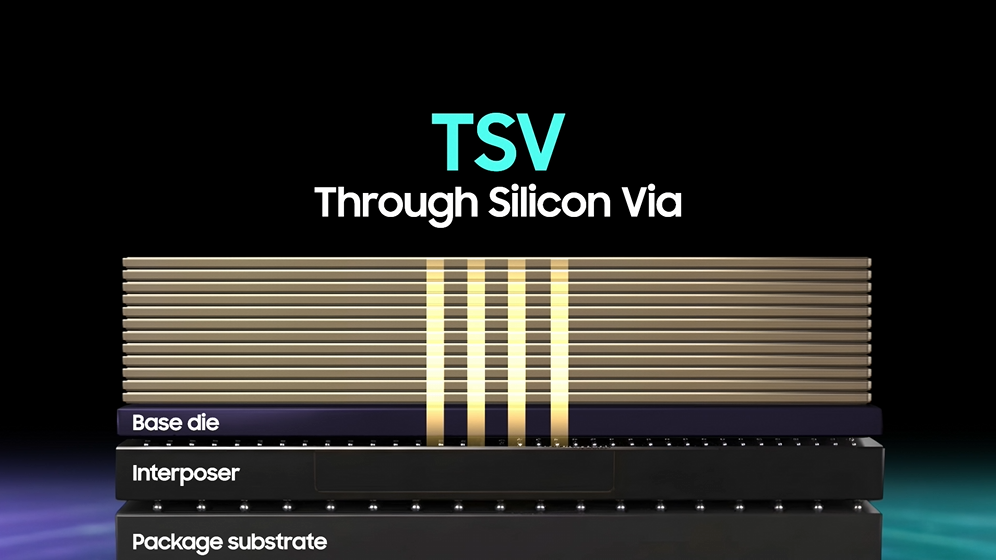

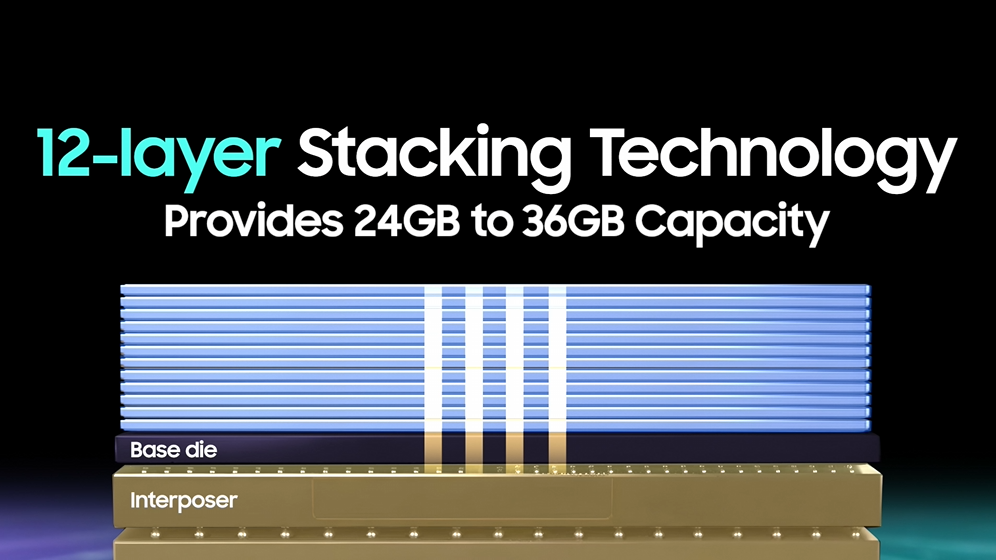

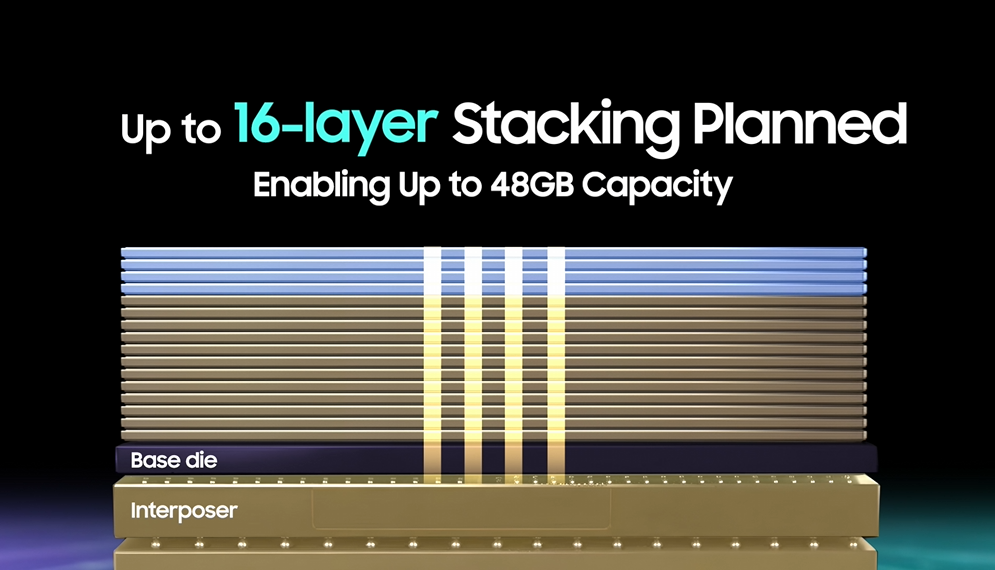

The current HBM4 lineup offers capacities ranging from 24GB to 36GB through 12-layer stacking. Samsung also plans to introduce 16-layer configurations to meet higher-capacity requirements. These stack heights are made possible by Samsung’s advanced packaging, which uses Through-Silicon Via (TSV) technology to connect the stacked dies vertically.
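Stack capacity is the number of DRAM layers multiplied by the capacity of each die. As a rough sketch, the 24GB and 36GB figures for a 12-layer stack imply per-die densities of 16Gb and 24Gb. Those densities are inferred here by division and are not stated in the article, and the 16-layer result is plain arithmetic rather than an announced specification.

```python
# Stack capacity = number of DRAM layers x per-die capacity.
# Assumption: die densities (16 Gbit, 24 Gbit) are inferred from the article's
# 24GB and 36GB figures for 12 layers; the article does not state them.

BITS_PER_BYTE = 8


def stack_capacity_gb(layers: int, die_gbit: int) -> float:
    """Return stack capacity in GB for a given layer count and die density in Gbit."""
    return layers * die_gbit / BITS_PER_BYTE


for layers in (12, 16):
    for die_gbit in (16, 24):
        capacity = stack_capacity_gb(layers, die_gbit)
        print(f"{layers}-layer, {die_gbit}Gb dies: {capacity:.0f} GB")
```

With the same inferred die densities, a 16-layer stack would work out to 32GB or 48GB, which illustrates why taller stacks are the route to higher capacity.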

Redefining Power Efficiency and Thermal Management

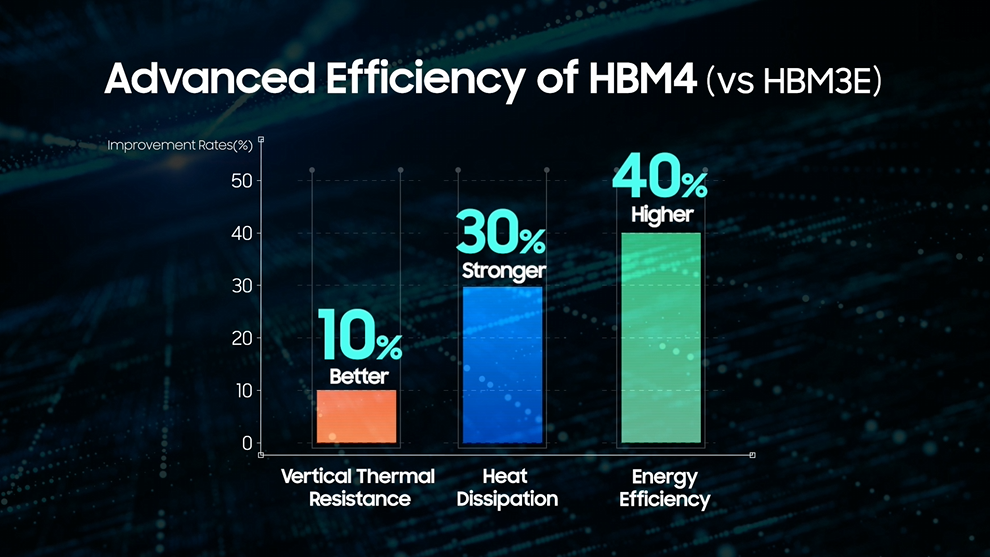

HBM4’s architecture delivers a 40% reduction in power consumption compared to its predecessor, HBM3E, significantly lowering the energy demands of AI infrastructure. Thermal performance also improves, with a 10% improvement in thermal resistance and 30% better heat dissipation. These gains help HBM4 sustain its performance under the thermal loads typical of AI datacenter environments.

This video walks through the key design features of HBM4 and highlights its role in Samsung Semiconductor’s broader AI infrastructure strategy.

Related tag

Related Stories

-

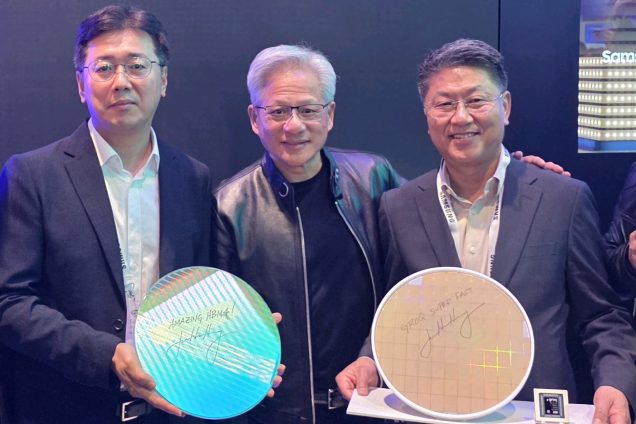

2026.03.17[Video] Architecting the AI Era: Samsung Electronics and NVIDIA Define the Future at GTC 2026

2026.03.17[Video] Architecting the AI Era: Samsung Electronics and NVIDIA Define the Future at GTC 2026 -

2026.03.10Samsung Launches Sokatoa to Enhance GPU Performance Analysis on Android

2026.03.10Samsung Launches Sokatoa to Enhance GPU Performance Analysis on Android -

2026.01.09[CES Innovation Awards® 2026 Honoree] Portable SSD T7 Resurrected: 100% Recycled Aluminum Body Case and Recyclable Packaging, Designed for Circular Resource Use

2026.01.09[CES Innovation Awards® 2026 Honoree] Portable SSD T7 Resurrected: 100% Recycled Aluminum Body Case and Recyclable Packaging, Designed for Circular Resource Use